Apple Vision Pro, History and Future of Computing Platforms

Apple made one of the biggest announcements in its history this month by unveiling its mixed reality headset, the Apple Vision Pro. This marked not only a new product in the company’s catalog but also a potentially pivotal moment for computing platforms. Apple used the term “Spatial Computing” to describe this new era, in which users will be more immersed in content than ever as software becomes even more intertwined with the real world.

But will this really change the way people interact with computers? Where does AI fit into all of this? To have a clearer vision of where we’re going, it is always useful to look back at a bit of history.

Computing Platform, Explained

Computing Platforms are ever-evolving, and thus their definition is not static. Some people argue that the origin of Computing Platforms dates back to 3,000 BC, when the Abacus, the first instrument for counting and calculating, was invented. When it comes to modern Computing Platforms (post-1950’s), though, a generally accepted definition is that a Computing Platform is an environment in which software can be executed. In that sense, it includes hardware devices, such as an iPhone or a PC, and the operating systems that allow software to interact with the hardware, such as Apple’s iOS and Microsoft’s Windows.

These platforms have changed a lot in the previous decades and below I will go over the main characteristics of each era of computing to finally share my vision for what is coming next.

Mainframe Era (1950’s to 1960’s)

The era of computers that took entire rooms. Mostly governments and large organizations used them for complex calculations. Only trained staff operated these machines directly.

This is regarded as the first era of modern computing platforms and it is known for its computers that would take entire rooms. Large organizations used these giant machines for complex calculations and data processing, such as consumer statistics, enterprise resource planning, and large-scale transaction processing.

Unlike today, only trained staff operated computers, and the vast majority of people never interacted with the machines directly. Computing time was also very expensive, which meant that users had to write their code offline and revise it many times before executing it, to reduce the chance of an error or bug.

Another peculiarity of this era was that each mainframe system required its own operating system, and it was impossible to share applications between systems or upgrade the system without rewriting the software applications. This would be equivalent to today’s Windows OS having to be adapted to every different PC hardware configuration out there.

Minicomputers (1960’s to 1970’s)

The era of computers that fit on a table. Computing cost was much lower and companies start having computers on site.

From 1965 to 1970, there was a massive drop in the cost of electronics which had several implications for the computing world. The invention of integrated circuits was not only the catalyst for this drop in costs but it also allowed for a drastic reduction in the size of machines so that a computer that would have filled a room in 1965 now took up the space of a table.

Organizations could now buy a small time-sharing computer that would provide in-house computing instead of paying subscription fees for access to computation performed outside of their sites. The drop in computer cost also made it possible for regular people to interact directly with these machines, which led many students to consider computer science as a career path. Other advancements on the software side also allowed the same Operating System to run on different machines without any code adaptations. This would be crucial in getting us to the huge software market that we see today.

Microcomputers or PC era (1980’s to 1990’s)

The era of user-friendly interfaces. Computers start getting more and more into people’s homes and coding becomes a hobby for many.

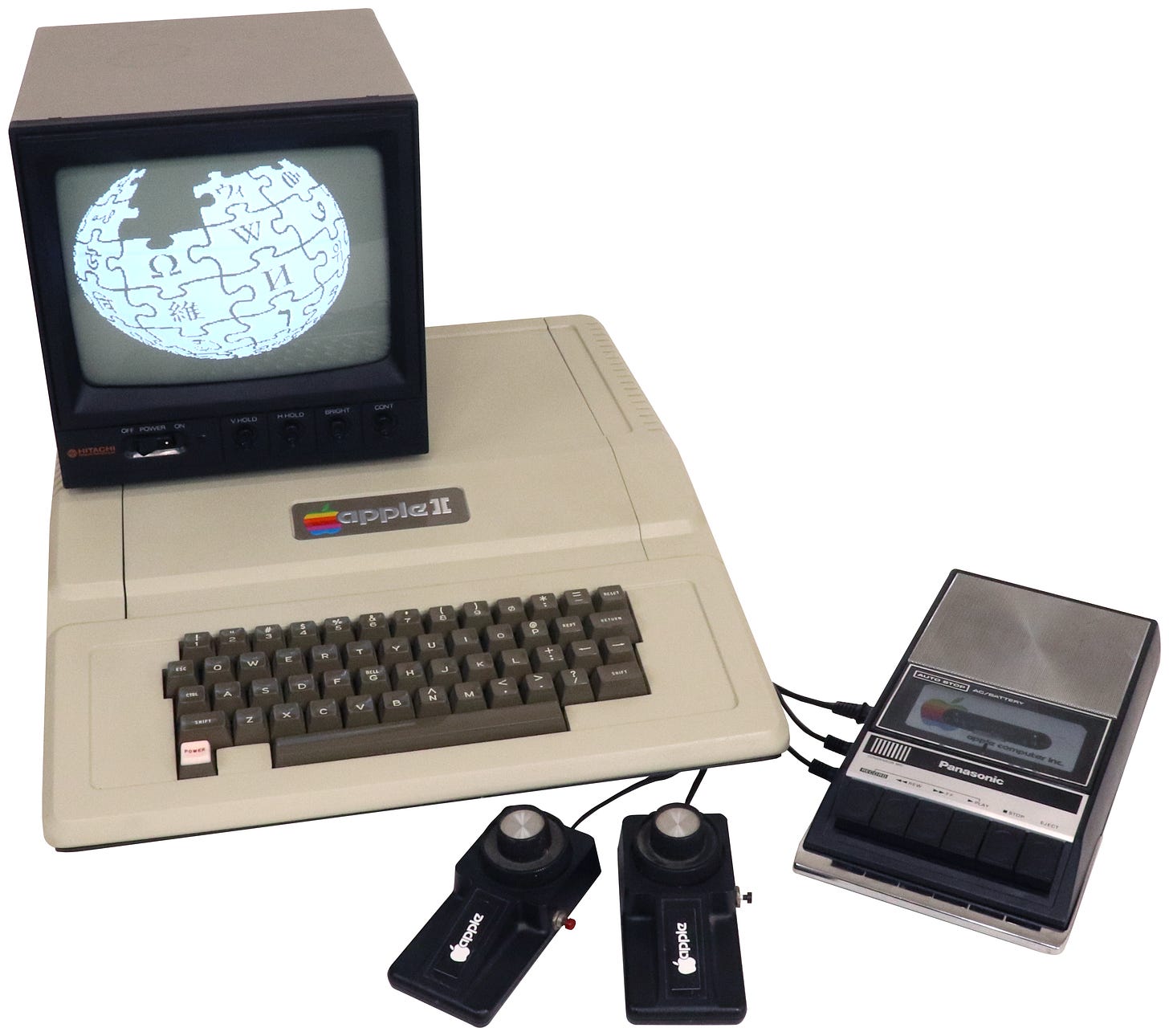

This is when Apple finally enters the stage. Technological advancements, most notably the invention of microprocessors, once again brought computers’ cost and size down, which allowed many more people to code and to see computers as a hobby. Two of those people were Steve Jobs and Steve Wozniak, the co-founders of Apple Computer. They were both members of the Homebrew Computer Club, a group that would meet on a regular basis to discuss their interests in computer science, and where they first discussed their vision of a more user-friendly home computer. This shared vision would bring them to design what was called the Apple I in Jobs’s garage. Its successor, however, was the actual game changer in the computer world. The Apple II is considered the most important of the first generation of commercial microcomputers because it was not just a toy for hobbyists but actually had a practical use for the general audience. One example was VisiCalc, the first spreadsheet program for personal computers.

Internet and Web Era (1990s-Present)

The era of connected computers. Activities that were once done only in the physical world start moving online and being done via computers.

The internet changed everything in the computing world. Before it, all the work that businesses, governments, and regular users did on computers was stored on the local machine, and it was very hard to share this work or interact with other computer users.

Internet technology changed this and effectively connected computers all around the world, greatly increasing the number of use cases and user base of these machines. E-mail made it possible for users to send electronic letters to anyone in the world in seconds. Some early players started to appear in the online shopping space. Financial transactions and banking also started to slowly go online. All of these new applications, added to costs still going progressively lower and machines getting smaller, accelerated computer adoption to the point that almost every household had one.

Mobile & Cloud (2000’s-Present)

The era of computers that fit in your hand. The number of applications skyrockets. Cloud technology further democratizes computing power for both end users and organizations.

It was a historic moment when Steve Jobs got up on stage at the Macworld event in 2007 and announced the iPhone. This device, widely considered the first modern smartphone, could do much of what a typical computer could but in a form factor that was completely portable. People could use it to send e-mail, listen to music, browse the web, and much more, all on a device with a battery that would last most of the day. It is crazy to think that what in the 50’s took an entire room could then fit in a pocket and do much more.

Another innovation that fundamentally changed the computing landscape was cloud computing. This technology allows us to use the power of other computers, which could be anywhere in the world, for things we previously did on our own computers, such as storing data (images, video, documents, etc.) and using GPU power. This leveled the playing field even further between large organizations and smaller companies, since now anyone could start a software company or any compute-intensive business without heavy investments in infrastructure, among many other changes.

The Future - Spatial Computing and AI

It is easy to define eras by looking back in time and less so while you’re living them. However, I believe we are on the brink of a new computing era that is enabled by a combination of different innovations, both on the software and hardware fronts. Much like all the preceding eras, we will gradually see people changing how and with what frequency they interact with computers.

Starting with the how, we will require much less direct interaction with hardware devices to control computers. One of the biggest highlights of the new Apple device is its eye and hand tracking technologies, which allow users to navigate the UI basically by looking at things and moving their fingers. Text can also be entered by voice dictation or on a virtual keyboard, which reduces the need for a physical keyboard. The Vision Pro seems to condense a full desktop experience into a headset that will likely get thinner and more compact with time.

Going in the opposite direction of what we have seen in the past decades of computing evolution, users will be able to spend much less time interacting with computers if they desire to. Recent advancements in AI, most notably generative AI, will allow everyone to have their own AI companions that will help them with a diverse set of tasks, both personal and work-related. An example, which I shared in one of my previous posts, is a salesperson having an army of bots (AI companions) that can crawl the internet in search of qualified leads, send personalized e-mails to those leads, follow up, etc. We are hopefully heading toward a future in which people will be much more efficient at work and thus have more time to use computers for entertainment or do things in the real world.

There is mounting evidence that we are entering a new computing era but are still in its early stages. Next-gen hardware adoption will likely be slow since spatial computing devices are still very expensive (Apple Vision Pro will cost $3.5k) and so far have not shown a killer app that is only possible on this new platform (like e-mail in the internet era). AI, on the other hand, is already seeing massive adoption and its applications will only get better. All in all, we have always been and still are moving in the direction of having computers help us more and more.

Dive Deeper

The History of Computers by Fresh and Felicia (video)

Vision Pro by Benedict Evans

Why AI Will Save the World by Marc Andreessen

Thought of the Month

“It’s shocking how bad things can be on their way to being good. It’s like when someone’s solving a Rubik's Cube, and it looks like they’re so far from solving it right before they solve it.” - Finneas + Rick Rubin podcast

This was an interesting quote I came across this month. I guess you never know what will be around the next corner so the best thing you can do is to work hard and be patient, even if things don’t seem to be working out.

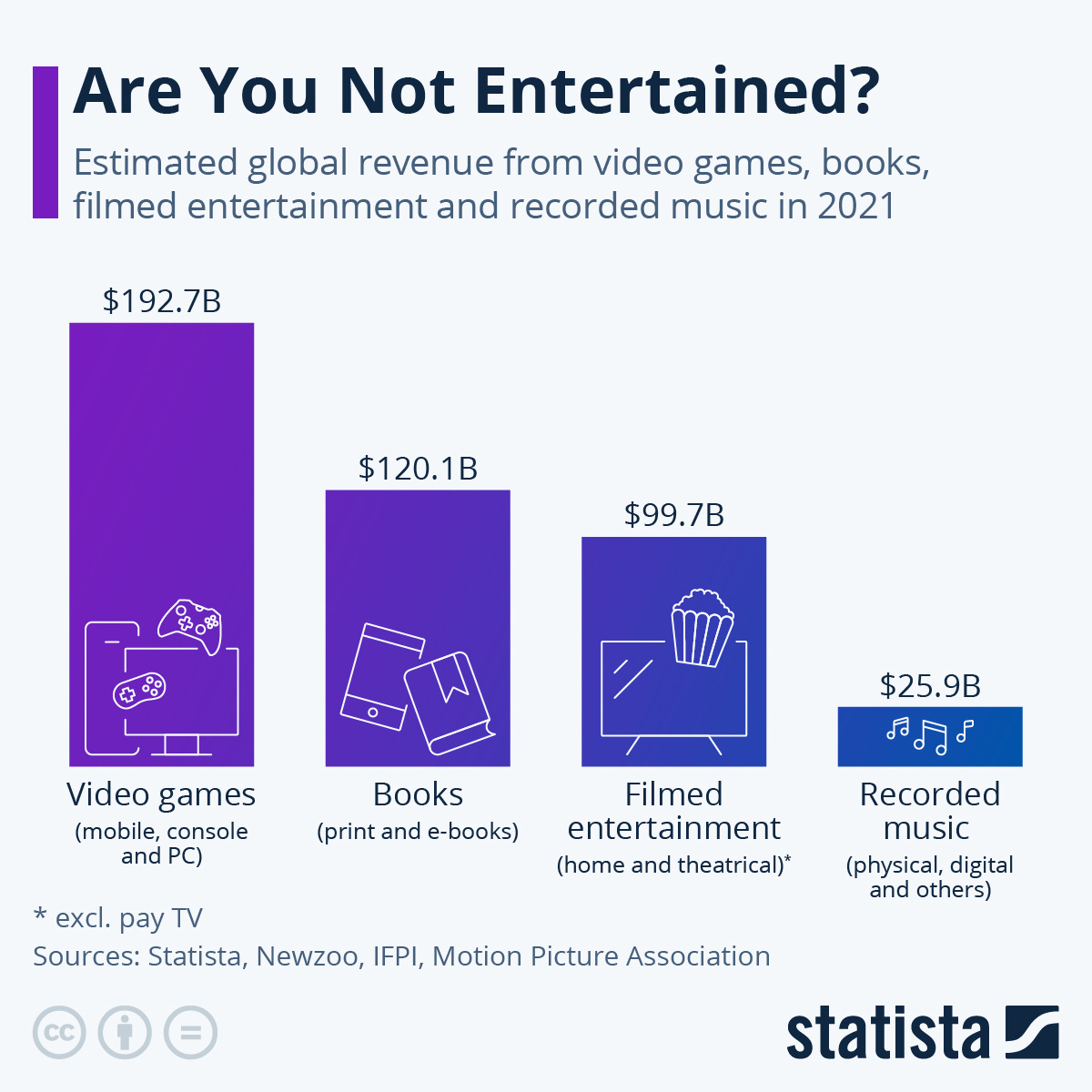

Chart of the Month

I always knew that gaming was huge compared to other entertainment industry segments, but actually seeing the numbers impressed me. In 2021, video game-related revenue was almost double that of filmed entertainment. No wonder Microsoft is trying so hard to acquire Activision Blizzard and other big tech companies are also making big investments in the sector.